Bootstrapping is a statistical procedure that utilizes resampling (with replacement) of a sample to infer properties of a wider population.

More often than not, we want to understand the properties of a population but we only have access to a small sample of that population. Sometimes, we are unable to gather more data because it is too expensive, too time consuming, or just not possible. When this is the situation, we must use the sample we already have in a clever way to learn about the characteristics of the population we are interested in. In comes the bootstrap method! The whole idea of bootstrapping is to randomly resample (with replacement) our existing sample so we in effect have more "samples" to work with. These resamples can be used to estimate confidence intervals (which will be the focus of this blog post), reduce biases, perform hypothesis tests, and more. With bootstrapping, we are quite literally pulling our data up by its bootstraps. Let's take a look at how it works.

How does it work?

- Take n repeated random samples, with replacement, from the given dataset. These are called "resamples" and should be the same size as the original sample.

- Calculate the statistic of interest for each of these resamples, e.g. mean, median, standard deviation, etc.

- Now you that you have a distribution of n different estimates of the statistic of interest, you can calculate the confidence interval on that statistic to determine its variability.

You might be wondering why exactly this works and how this method allows us to understand the properties of the population. Basically, bootstrapping works by treating the distribution from the resamples as a reasonable approximation of the true probability distribution. Also, the variability of statistics calculated from the original sample is approximated well by that of each resample.

The basic idea is that inferences made from the resampled data is a good proxy for inferences about the population itself. Check out Bradley Efron's paper if you are interested in diving into this reasoning deeper.

Worked example with Python

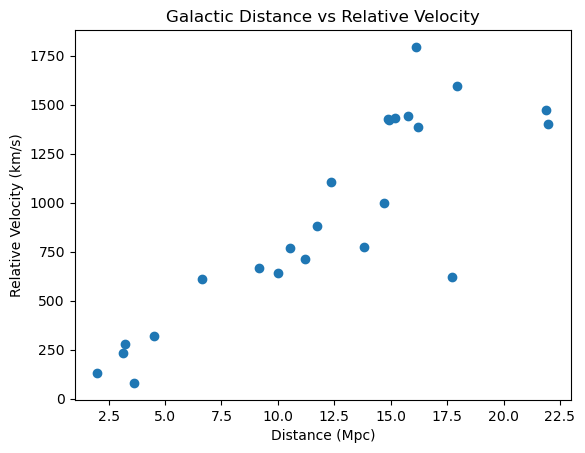

In our Python example we will use data from the Hubble Space Telescope. This data contains distances and velocities of 24 galaxies containing Cepheid stars, from the Hubble space telescope key project to measure the Hubble Constant.

The data contains three columns:

- Galaxy: A factor label identifying the galaxy

- y: The galaxy’s relative velocity measured in kilometers/second (km/s)

- x: The galaxy’s distance from Earth measured in Megaparsecs (Mpc) (Note: 1 Mpc = 3.09e13 km)

We can use this data to estimate the Hubble Constant, \(\beta\), and the age of the universe, \(\beta^{-1}\), with the following:

\[\begin{align} y = \beta x \end{align}\]

Here I’ll give some quick scientific context as to what the Hubble Constant is and how it can be used to estimate the age of the universe.

According to the standard Big Bang model, the universe is expanding uniformly according to Hubble’s Law:

\[\begin{align} v = H_0 d \end{align}\]

where \(v\) is apparent velocity of the galaxy and \(d\) is the distance to the galaxy. \(v\) and \(d\) are related linearly by \(H_0\), which we call the Hubble Constant. These variables, \(v, d, H_0\), are the standard astrophysical notations for velocity, distance, and the Hubble Constant. In terms of the variables given in our dataset, Hubble’s Law is:

\[\begin{align} y = \beta x \end{align}\]

where \(y\) is the relative velocity of the galaxy, \(\beta\) is the Hubble Constant, and \(x\) is the distance to the galaxy. From now on, I’ll use \(y, x,\) and \(\beta\) to denote galactic velocity, distance, and the Hubble Constant.

Now, how does the Hubble Constant help us determine the age of the universe? The inverse of the Hubble Constant, \(\beta ^{-1}\), gives the approximate age of the universe. This might not be immediately clear, so let’s take a look at the units of our quantities to see why it’s \(\beta ^{-1}\). \(y\) is measured in the units km/s, or distance over time. \(x\) is measured in units of Mpc, or distance. Writing this out we see:

\[\begin{align} \frac{distance}{time} = [\beta] distance \end{align}\]

Here, I’m using square brackets around \(\beta\) as a placeholer for the dimensions of \(\beta\).

Now, we can determine the units of \(\beta\) by comparing the units on the left side of the equation to the units on the right hand side of the equation, and figuring out what units \(\beta\) must take to make the dimensions of the two sides of the equation equal. From this, we can see that the units of the Hubble Constant, \(\beta\), must be \(\frac{1}{time}\):

\[\begin{align} \frac{distance}{time} = \frac{1}{time} distance \end{align}\]

\[\begin{align} \frac{distance}{time} = \frac{distance}{time} \end{align}\]

We now see the units of the Hubble Constant are \(\frac{1}{time}\), so taking the inverse of \(\beta\) will give units of time, and thus an estimate of the age of the universe (because the unit of age is time).

The next question that comes to mind is how do we determine the Hubble Constant from our velocity and distance data? Because these quantities are related linearly by Hubble’s Law, the answer is simply linear regression. We could perform a linear regression on the 24 velocity-distance pairs we have in our dataset and read off the linear coefficient to get a value for \(\beta\), but we can’t say much about how well the true value of \(\beta\) is constrained.

We could submit a proposal to the Hubble Telescope team to apply for more telescope time to measure the velocities of and distances to more galaxies to get more samples to analyze, but that would be expensive and time consuming and there’s no guarantee that our proposal would be accepted. So we turn to bootstrapping!

Now, let’s work through estimating a 95% confidence interval on the value of the Hubble Constant with Python.

First, let’s import the required libraries for our analysis:

import pandas as pd

import numpy as np

from sklearn.linear_model import LinearRegression

from scipy.stats import bootstrap

import matplotlib.pyplot as plt

Next, let’s import the data and look at the first 5 rows using the read_csv() and head() functions from pandas. Click here to download the data if you would like to follow along.

data = pd.read_csv("hubble.csv")

data.head()

Galaxy y x

0 NGC0300 133 2.00

1 NGC0925 664 9.16

2 NGC1326A 1794 16.14

3 NGC1365 1594 17.95

4 NGC1425 1473 21.88

We can see the three columns, Galaxy, y, and x. Again, Galaxy is the galaxy identifier/name, y is the relative velocity of the galaxy, and x is the distance to the galaxy. Let’s plot our data to investigate the relationship between distance and velocity.

# Extract velocity, y, and distance, x, from our imported data

y = data["y"]

x = data["x"]

# Plot x vs y.

plt.scatter(x,y)

plt.title("Galactic Distance vs Relative Velocity")

plt.xlabel("Distance (Mpc)")

plt.ylabel("Relative Velocity (km/s)")

plt.show()

We can see that the relationship between relative velocity and distance is roughly linear.

Now our goal is to bootstrap this data to estimate a 95% confidence interval on the Hubble Constant. Recall bootstrapping requires that we resample the data many times so that we get a distribution of a particular statistic, in this case the Hubble Constant, that we can use to estimate a confidence interval on that statistic. Since we will resample the data many many times, let’s define a function that creates a resample of the data that we can call later in a loop:

def resample(data, seed):

'''

Creates a resample of the provided data that is the same length as the provided data

'''

import random

random.seed(seed)

res = random.choices(data, k=len(data))

return res

Our resample() function takes in data that it will resample from. It also takes in seed that will set a psuedorandom seed; this is purely for reproducibility of this example.

Now let’s set up our data so that we can feed it into our resample() function. Our data contains velocity-distance pairs: specific velocities correspond to specific distances. So, we want to randomly resample pairs of velocity and distance, we don’t want to randomly sample velocity then randomly sample distance separately. Let’s use Python’s zip() function to “zip” our corresponding velocities and distances together, then resample the “zipped” pairs. This will ensure that we maintain the correct velocity-distance pairs throughout our bootstrap analysis.

# Extract the distance, x, and velocity, y, values from our pandas dataframe

distances = data["x"].values

velocities = data["y"].values

# Zip our distances and velocities together and store the zipped pairs as a list

dist_vel_pairs = list(zip(distances, velocities))

# Print out the first 5 zipped distance-velocity pairs

print(dist_vel_pairs[:5])

[(2.0, 133), (9.16, 664), (16.14, 1794), (17.95, 1594), (21.88, 1473)]

In the above output, we can see the first 5 “zipped” distance-velocity pairs. Each pair is a tuple containing the distance in index 0, and the corresponding velocity in index 1. Now let’s generate 10,000 resamples of distance-velocity pairs using our resample() function in a list comprehension. After generating the 10,000 resamples, let’s use a for loop to perform a linear regression on each of them to get a distribution of 10,000 Hubble Constant, \(\beta\), estimates. Let’s use the LinearRegression() function from the sklearn.linear_model module to perform our linear regressions. In the argument of LinearRegression() we set fit_intercept=False so the regression does not fit an intercept coefficient. This is because there is no intercept in Hubble’s Law.

# Generate 10,000 resamples with a list comprehension

boot_resamples = [resample(dist_vel_pairs, val) for val in range(10000)]

# Calculate beta from linear regression for each of the 10,000 resamples and store them in a list called "betas"

betas = []

for res in boot_resamples:

# "Unzip" the resampled pairs to separate x and y so we can use them in the LinearRegression() function

dist_unzipped, vel_unzipped = zip(*res)

dist_unzipped = np.array(dist_unzipped).reshape((-1, 1))

# Find linear coefficient beta for this resample and append it to a list of betas

betas.append(LinearRegression(fit_intercept=False).fit(dist_unzipped, vel_unzipped).coef_[0])

# Print out the first 5 beta values

print(betas[:5])

[70.49289924780366, 86.37984957925575, 75.39193217270235, 78.0888441398601, 75.35740068419938]

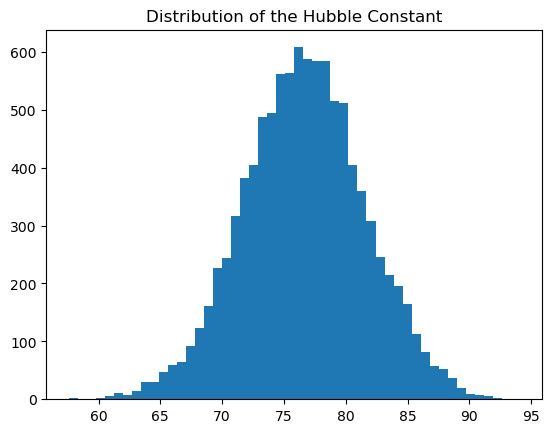

This may take a minute to run because we are performing 10,000 linear regressions. At the end I printed out the first 5 estimates of the Hubble Constant just so we can see what some of them look like. We do see some variability in the values! Let’s now take a look at the distribution of Hubble Constants we found with a histogram.

# Distribution of betas (hubble constants).

plt.clf()

plt.hist(betas, bins=50)

plt.title("Distribution of the Hubble Constant")

plt.show()

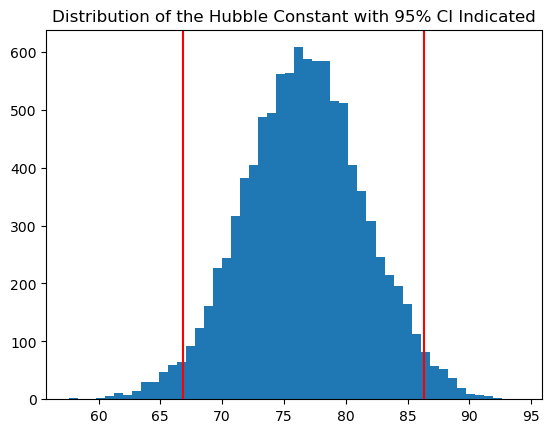

Now that we have many possible values for the Hubble Constant, \(\beta\), we can calculate the 95% confidence interval on this distribution. This will serve as an approximate confidence interval on the true value of the Hubble Constant. Let’s use the numpy.percentile() function to calculate our confidence interval.

# Calculate the values of 2.5th and 97.5th percentiles of our distribution of betas

conf_interval = np.percentile(betas, [2.5,97.5])

print(conf_interval)

[66.86548795 86.30720865]

We find the boundaries of our 95% confidence interval are about 66.9 and about 86.3. This informs the uncertainty in our estimate. The process of sampling data and calculating 95% confidence intervals captures the true value we’re trying to estimate about 95% of the time. In this case we’re confident the true time is between 66.9 and 86.3 seconds\(^{-1}\).

Let’s replot our histogram with the confidence interval marked by vertical lines:

plt.clf()

plt.hist(betas, bins=50);

plt.title("Distribution of the Hubble Constant with 95% CI Indicated")

plt.axvline(conf_interval[0], color="red")

plt.axvline(conf_interval[1], color="red")

plt.show()

Now let’s take a look at the age of the universe from our estimates. The universe is currently estimated to be about 13.8 billion years old, let’s see how well our estimates match up to this value. When doing our calculations, we must keep our units consistent, so let’s convert megaparsecs (Mpc) to kilometers (km) and seconds (s) to years (yr).

The conversion from Mpc to km:

\[\begin{align} 1 \ \text{Mpc} = 3.09\times 10^{19} \ \text{km} \end{align}\]

The conversion from s to yr:

\[\begin{align} 1 \ \text{s} = 1 \ \text{year} * \frac{365 \ \text{days}}{1 \ \text{year }} * \frac{24 \ \text{hours}}{1 \ \text{day}} * \frac{60 \ \text{minutes}}{1 \ \text{hour}} * \frac{60 \ \text{seconds}}{1 \ \text{minute}} \end{align}\]

\[\begin{align} 1 \ \text{s} = 1 \ \text{year} * 365 * 24 * 60^2 \end{align}\]

Let’s use these conversion factors to convert our Hubble Constant confidence interval to a confidence interval on the age of the universe:

# Calulation of 95% confidence interval for the age of the universe

conf_interval_age = 3.09e19/(conf_interval * (365*24*60**2))

conf_interval_age

array([1.46537863e+10, 1.13528474e+10])

From this calculation, we’re 95% confident the age of the universe is between about 11.4 billion and 14.8 billion years old. That’s spot on with the current estimation of the age of the universe, 13.8 billion years! Very cool.

Summary

We used the bootstrap method to randomly resample (with replacement) our 24 galactic relative velocity and distance datapoints 10,000 times, estimate the Hubble Constant by performing a linear regression for each of those resamples to get a distribution of values, and calculate a 95% confidence interval on the distribution of the Hubble Constant. We then used this confidence interval to calculate the confidence interval on the age of the universe. These confidence intervals serve as good proxies for that of the true Hubble Constant/age of the universe if we could calculate these values using relative velocity and distance data from the entire population of galaxies in the universe.

Python session details

Python version: 3.9.6

numpy version: 1.22.2

pandas version: 1.4.0

matplotlib version: 3.5.1

sklearn version: 1.0.2

References

- B. Efron. (1979). “Bootstrap Methods: Another Look at the Jackknife.” The Annals of Statistics, 7(1) 1-26. https://doi.org/10.1214/aos/1176344552

- Freedman, W. L., Madore, B. F., Gibson, B. K., Ferrarese, L., Kelson, D. D., Sakai, S., Mould, J. R., Kennicutt, Jr., R. C., Ford, H. C., Graham, J. A., Huchra, J. P., Hughes, S. M., Illingworth, G. D., Macri, L. M., & Stetson, P. B. (2001). Final Results from the Hubble Space Telescope Key Project to Measure the Hubble Constant. The Astrophysical Journal, 553(1), 47–72. https://doi.org/10.1086/320638

- Hubble Space Telescope Data. R. (n.d.). Retrieved from https://search.r-project.org/CRAN/refmans/gamair/html/hubble.html

- Wikimedia Foundation. (2023, March 27). Bootstrapping (statistics). Wikipedia. Retrieved from https://en.wikipedia.org/wiki/Bootstrapping_(statistics)

Samantha Lomuscio

StatLab Associate

University of Virginia Library

March 28, 2023

For questions or clarifications regarding this article, contact statlab@virginia.edu.

View the entire collection of UVA Library StatLab articles, or learn how to cite.